Assess and mitigate risks in the use of data

“Most of the reasons for not sharing data were not about ‘We don’t believe that we can do something with our data’, but about ‘We are not prepared’, ‘We do not want to take the risk’, or ‘The legal office will not sign’.”

Business & technology advisor, Data Pitch

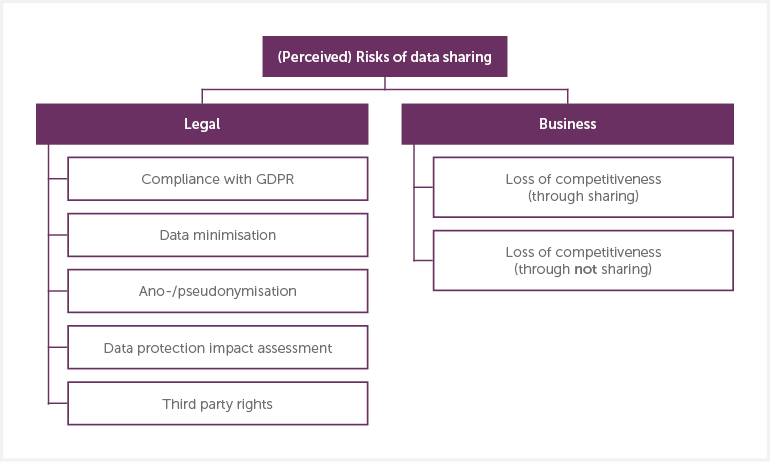

An assessment of the risks of engaging in a data sharing process is required for all stakeholders in a data sharing relationship, but it matters most for data holders, who are accountable for the use of the data they share. Organisations decline to share data for several reasons. Two of the most common are the legal compliance requirements that apply to data sharing, together with the processes they entail, and the competitive advantage that could be lost or gained in the market through data sharing.

Legal risks

No data should be shared without first ensuring legal compliance and conducting a legal risk assessment. As a first step, organisations that want to share data need to be familiar with the legal framework that applies to them. For personal data shared in the EU, the central piece of legislation is the General Data Protection Regulation (GDPR). Under the GDPR, fines for the most serious infringements of privacy and data protection rules can reach 20 million Euros or 4% of total worldwide annual turnover, whichever is higher.
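To make the “whichever is higher” rule concrete, the upper-tier ceiling in Article 83(5) of the GDPR can be computed as below. This is a minimal sketch that only reproduces the maximum for an undertaking; it says nothing about the fine a supervisory authority would actually set, which depends on the factors listed in Article 83(2). The function and constant names are our own, chosen for illustration.

```python
# Minimal sketch: upper-tier GDPR fine ceiling for an undertaking (Article 83(5)).
# The ceiling is the higher of a fixed amount and a share of total worldwide
# annual turnover of the preceding financial year. Names are illustrative.

FIXED_CAP_EUR = 20_000_000   # fixed ceiling
TURNOVER_SHARE_PERCENT = 4   # percentage-of-turnover ceiling


def max_upper_tier_fine(annual_turnover_eur: int) -> int:
    """Return the maximum possible fine in whole euros for a given turnover."""
    if annual_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    # Integer arithmetic avoids floating-point rounding; fractions of a euro are dropped.
    turnover_cap = annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_cap)


if __name__ == "__main__":
    for turnover in (50_000_000, 2_000_000_000):
        print(f"turnover EUR {turnover:,}: ceiling EUR {max_upper_tier_fine(turnover):,}")
```

For an undertaking with a turnover of EUR 50 million, the fixed EUR 20 million cap is the higher figure; at EUR 2 billion, 4% of turnover (EUR 80 million) takes over. The two caps coincide at a turnover of EUR 500 million.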

However, done well, data sharing need not be a risky activity. On the contrary, the potential risks of sharing data should be balanced against the organisational risk of failing to realise the value of the data. The cost of risk mitigation may be comparatively small if the potential benefits are significant efficiency savings or a new product in a competitive market.

Data protection impact assessments (DPIAs) are mandatory for personal data processing that “is likely to result in a high risk to the rights and freedoms of natural persons” (Article 35 of the GDPR). Even where a DPIA is not mandatory, carrying one out is still best practice.
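Article 35(3) names three kinds of processing for which a DPIA is required in particular. A first-pass screening can record the answer to each as a flag, as in the sketch below. It is a hypothetical helper, not a tool from the Data Pitch toolkits, and the class and field names are assumptions. The named cases are not an exhaustive list of high-risk processing (supervisory authorities publish further lists under Article 35(4)), so a negative result only means that none of these cases applies.

```python
from dataclasses import dataclass


@dataclass
class PlannedProcessing:
    """Hypothetical yes/no answers describing a planned processing activity."""
    automated_evaluation_with_significant_effects: bool  # Art. 35(3)(a)
    large_scale_special_category_or_criminal_data: bool  # Art. 35(3)(b)
    large_scale_monitoring_of_public_area: bool          # Art. 35(3)(c)
    on_supervisory_authority_list: bool = False          # Art. 35(4) national lists


def dpia_screening(p: PlannedProcessing) -> tuple[bool, list[str]]:
    """Return (dpia_required, reasons), checking only the named cases."""
    reasons = []
    if p.automated_evaluation_with_significant_effects:
        reasons.append("35(3)(a): systematic, extensive automated evaluation, "
                       "including profiling, with legal or similarly significant effects")
    if p.large_scale_special_category_or_criminal_data:
        reasons.append("35(3)(b): large-scale processing of special category "
                       "or criminal conviction data")
    if p.large_scale_monitoring_of_public_area:
        reasons.append("35(3)(c): large-scale systematic monitoring of a "
                       "publicly accessible area")
    if p.on_supervisory_authority_list:
        reasons.append("35(4): listed by the competent supervisory authority")
    return bool(reasons), reasons


if __name__ == "__main__":
    plan = PlannedProcessing(
        automated_evaluation_with_significant_effects=False,
        large_scale_special_category_or_criminal_data=True,
        large_scale_monitoring_of_public_area=False,
    )
    required, why = dpia_screening(plan)
    print(required, why)
```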

Data subjects are living individuals who are identified or identifiable from a given dataset; information relating to them is personal data (as defined by Article 4(1) of the GDPR). One way in which a dataset can relate to data subjects is through its content. Sometimes this is obvious, e.g. when the data contains information about Maria Smith, such as her name, date of birth and contact details. A dataset can also relate to data subjects through the purpose of its processing (e.g. to learn something about or evaluate an individual), or through the result of its processing (i.e. when the processing is likely to have an impact on the rights and freedoms of data subjects).

In many instances of data sharing, the data shared is, or could become, personal data, and the same dataset can be non-personal in one context and personal in another. Furthermore, there is a risk that anonymised data may be re-identified, for example by linking it with other available datasets.
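One common way to gauge re-identification risk, chosen here for illustration rather than prescribed by the source, is k-anonymity: a dataset is k-anonymous if every combination of quasi-identifier values (attributes such as postcode or birth year that do not identify anyone alone but may in combination) is shared by at least k records. The sketch below computes k and lists the groups that fall below a chosen threshold; the field names and records are hypothetical. A high k is not proof of anonymity: for instance, it offers no protection if everyone in a group shares the same sensitive value.

```python
from collections import Counter


def group_sizes(records, quasi_identifiers):
    """Count records for each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity(records, quasi_identifiers):
    """Return k: the size of the smallest quasi-identifier group."""
    if not records:
        raise ValueError("no records")
    return min(group_sizes(records, quasi_identifiers).values())


def groups_below(records, quasi_identifiers, k):
    """Return groups with fewer than k records (most exposed to re-identification)."""
    sizes = group_sizes(records, quasi_identifiers)
    return {key: n for key, n in sizes.items() if n < k}


if __name__ == "__main__":
    # Hypothetical records: the third person is unique on (postcode, birth_year).
    records = [
        {"postcode": "SO17", "birth_year": 1980, "diagnosis": "A"},
        {"postcode": "SO17", "birth_year": 1980, "diagnosis": "B"},
        {"postcode": "SO17", "birth_year": 1991, "diagnosis": "A"},
    ]
    qi = ["postcode", "birth_year"]
    print(k_anonymity(records, qi))      # 1
    print(groups_below(records, qi, 2))  # {('SO17', 1991): 1}
```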

Other legal questions that need to be addressed are those of consent and of third-party rights to the data. If the data was collected from natural persons, even if they are no longer identifiable, did the data holder obtain consent at the time of collection for the data to be used for the intended purpose? Is it a purpose for which the individuals concerned could reasonably expect their data to be used? Finally, to be able to share a dataset, data holders must ensure that they hold an appropriate licence over the data. There should be no outstanding third-party rights, such as intellectual property belonging to others, contained in the data. While these checks might seem onerous, establishing clear answers greatly facilitates successful data sharing.
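Where consent is the lawful basis relied on, and the purposes each person consented to were recorded at collection time, the first question can be checked record by record before sharing. The sketch below assumes that record layout and uses hypothetical purpose labels; it cannot answer the second question, whether a purpose matches people's reasonable expectations, which needs human judgement.

```python
def split_by_consent(records, intended_purpose):
    """Partition records by whether recorded consent covers the intended purpose.

    Assumes each record may carry a 'consented_purposes' collection captured
    at collection time (a hypothetical layout). Records in the second list
    should be excluded, or another lawful basis established, before sharing.
    """
    covered, not_covered = [], []
    for record in records:
        purposes = record.get("consented_purposes", ())
        if intended_purpose in purposes:
            covered.append(record)
        else:
            not_covered.append(record)
    return covered, not_covered


if __name__ == "__main__":
    records = [
        {"id": "r1", "consented_purposes": {"service_delivery", "research"}},
        {"id": "r2", "consented_purposes": {"service_delivery"}},
        {"id": "r3"},  # no consent recorded at all
    ]
    ok, blocked = split_by_consent(records, "research")
    print([r["id"] for r in ok], [r["id"] for r in blocked])
```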

Some of the questions data holders should consider in this context are:

- Is personal data being shared?

- Has the data been anonymised or pseudonymised?

- Have data minimisation principles been applied?

- Are the safeguards and measures in place adequate to control the flow of these data?

- Is the data processing considered high risk? Is a DPIA required?

- Which data subjects’ rights are concerned, and to what use of their data have the affected data subjects consented?
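Two of the questions above, data minimisation and pseudonymisation, can be applied mechanically before a dataset leaves the organisation. The sketch below, with hypothetical field names, keeps only the fields a data user needs and replaces the direct identifier with a keyed hash (HMAC-SHA256) whose key stays with the data holder. Note that pseudonymised data is still personal data under the GDPR (Recital 26), because whoever holds the key can re-link it, and removing a direct identifier does nothing about quasi-identifiers left among the kept fields.

```python
import hashlib
import hmac
import secrets


def minimise_and_pseudonymise(records, needed_fields, id_field, key):
    """Return copies of records containing only `needed_fields`, with the
    direct identifier in `id_field` replaced by a keyed-hash pseudonym."""
    result = []
    for record in records:
        # Same identifier + same key -> same pseudonym, so records stay linkable
        # for the data user without revealing the identifier itself.
        pseudonym = hmac.new(
            key, str(record[id_field]).encode("utf-8"), hashlib.sha256
        ).hexdigest()
        reduced = {f: record[f] for f in needed_fields if f != id_field}
        reduced["pseudonym"] = pseudonym
        result.append(reduced)
    return result


if __name__ == "__main__":
    key = secrets.token_bytes(32)  # kept by the data holder, never shared
    records = [
        {"customer_id": "C001", "name": "Maria Smith", "dob": "1980-01-01",
         "postcode": "SO17", "monthly_spend": 120},
    ]
    print(minimise_and_pseudonymise(records, ["postcode", "monthly_spend"],
                                    "customer_id", key))
```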

A note on data protection

The legal and privacy toolkits developed by Data Pitch can help organisations work through these considerations. They provide guidance on:

- The types of data that are likely to fall within and outside the scope of the GDPR.

- The types of data processing activities that are considered as high-risk under the GDPR.

- The types and levels of measures that are required to control the flow of data.

- The basics of data flow mapping as an approach to the creation of data situation models, to be used as part of anonymisation assessments.
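A data flow map can be as simple as a list of flows between environments (systems or organisations). The sketch below, with hypothetical environment and dataset names, records flows as edges and uses a breadth-first search to list every environment that data leaving a given starting point could reach. It deliberately over-approximates, treating any flow out of an environment as able to carry data that entered it; it is an illustration, not the method set out in the Data Pitch toolkit.

```python
from collections import deque

# Each flow: (from_environment, to_environment, dataset). Names are hypothetical.
FLOWS = [
    ("crm_system", "analytics_warehouse", "customer_records"),
    ("analytics_warehouse", "data_user_sandbox", "customer_sample"),
    ("data_user_sandbox", "data_user_cloud_backup", "customer_sample"),
    ("finance_system", "analytics_warehouse", "invoices"),
]


def environments_reached(flows, start):
    """Return every environment that data leaving `start` may reach,
    directly or indirectly (conservative: ignores which dataset each flow carries)."""
    edges = {}
    for src, dst, _dataset in flows:
        edges.setdefault(src, []).append(dst)
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


if __name__ == "__main__":
    print(sorted(environments_reached(FLOWS, "crm_system")))
```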

Business risks

Putting aside the sharing of data that sends anti-competitive commercial signals to the market, there are other potential business risks in sharing data. One is the risk of losing a competitive advantage. Sharing data always implies a potential loss of control over the data, as it leaves organisational boundaries. On the other hand, not sharing data carries its own risk: forgoing opportunities to develop and, in the process, gain a competitive advantage. Choosing to share is therefore a strategic business decision, based on the view that continuous development is necessary to stay competitive and that data sharing is a suitable and cost-effective way of achieving it.

The more competitive a domain is, the higher the perceived risk of letting data leave the boundaries of the organisation. Whether data sharing affects an organisation's competitiveness, for better or worse, will largely depend on how trustworthy the data users it is shared with are, and on how that trust is structured. Assessing the potential of data sharing and vetting data users appropriately are key to minimising risk; to establish that data users are trustworthy, data holders should carry out sufficient due diligence checks.

Another risk is that processing of the data fails, for instance because the data is of insufficient quality or quantity. As above, releasing a sample or subset can help with this. Making sample data available also makes the engagement between data holders and data users more fruitful, as questions can be raised early and the data or solutions refined to accommodate them. Discovering that more data is needed, or that the data needs extensive pre-processing before work can begin, can be a significant barrier; continuous discussion between data holder and data user can help mitigate this risk.
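Releasing a sample or subset can be as simple as drawing a reproducible random sample. In the sketch below the function name, fraction and seed are arbitrary illustrative choices. A simple random sample may under-represent rare cases, so a stratified sample may suit some datasets better; and a sample of personal data is still personal data.

```python
import random


def release_sample(records, fraction, seed):
    """Draw a reproducible simple random sample of `fraction` of the records."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not records:
        return []
    size = max(1, round(len(records) * fraction))
    rng = random.Random(seed)  # dedicated generator: same seed, same sample
    return rng.sample(records, size)


if __name__ == "__main__":
    records = [{"row": i} for i in range(1_000)]
    sample = release_sample(records, fraction=0.05, seed=42)
    print(len(sample))  # 50
```

Recording the seed alongside the release lets the data holder reproduce exactly which records were shared, should questions arise later.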

A note on pre-processing

Pre-processing, especially of large, unstructured datasets, can consume extensive time and resources. It is important to establish who will carry out this work as part of the data sharing arrangement. Depending on the amount, quality and status of the source data, this may be a substantial amount of work, which should be estimated and costed upfront. In a Data Pitch survey, data users reported that low data quality and the data preparation it required were the biggest and most underestimated challenges they experienced.
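Before costing pre-processing, profiling a sample can give an early, partial signal of how much work lies ahead. The sketch below, with hypothetical field names, reports the share of records in which each field is missing or blank. It does not detect other common problems such as inconsistent formats, duplicates or out-of-range values, which a realistic estimate would also need to consider.

```python
def _is_blank(value):
    """Treat None and empty or whitespace-only strings as missing."""
    return value is None or (isinstance(value, str) and not value.strip())


def missing_rates(records, fields):
    """Return {field: share of records where the field is absent or blank}."""
    if not records:
        raise ValueError("no records to profile")
    total = len(records)
    return {
        field: sum(1 for r in records if _is_blank(r.get(field))) / total
        for field in fields
    }


if __name__ == "__main__":
    sample = [
        {"customer_id": "C001", "postcode": "SO17", "spend": 120},
        {"customer_id": "C002", "postcode": " ", "spend": None},
        {"customer_id": "C003", "spend": 75},
    ]
    for field, rate in missing_rates(sample, ["customer_id", "postcode", "spend"]).items():
        print(f"{field}: {rate:.0%} missing")
```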

Key resources:

Legal and privacy toolkit (v1) (Stalla-Bourdillon & Knight, 2017): A guide to the critical things to consider when sharing and reusing data for defined innovative purposes under the Data Pitch programme. It includes an overview of the legal and regulatory framework that applies to data sharing and data reuse, and a risk mitigation strategy for the secondary use of data.

https://datapitch.eu/privacy-toolkit-v1/

Legal and privacy toolkit (v2) (Stalla-Bourdillon & Carmichael, 2018): A guide on the basics of mapping data flows as an effective and practical approach to the creation of data situation models for anonymisation assessment and GDPR compliance.

https://datapitch.eu/privacy-toolkit-v2/

Data protection by design: Building the foundations of trustworthy data sharing (Stalla-Bourdillon et al., 2019): This paper suggests a common workflow to embed data protection by design within data sharing practice.

https://dx.doi.org/10.5281/zenodo.3079895

Anonymous data v. personal data—a false debate: an EU perspective on anonymisation, pseudonymisation and personal data (Stalla-Bourdillon & Knight, 2017): This paper discusses the benefits and challenges of data anonymisation.

https://eprints.soton.ac.uk/400388/

EU Regulation on the free flow of non-personal data (European Commission, 2019c)

https://ec.europa.eu/digital-single-market/en/free-flow-non-personal-data